Most software updates succeed quietly.

But the rare ones that fail? They become legends inside engineering teams — and warnings written into playbooks forever.

Here are three real case studies where an update didn’t just break software… it exposed how fragile scale can be.

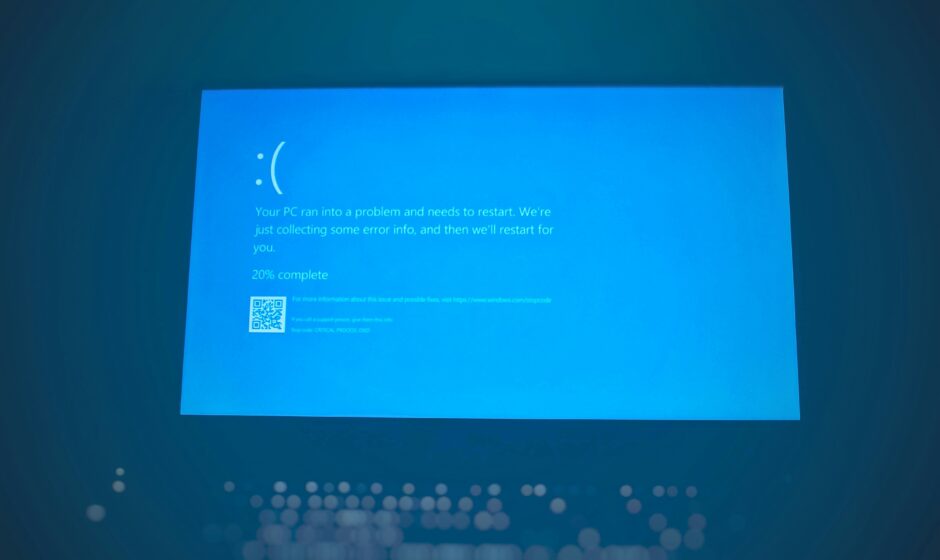

Case Study 1: The Windows Update That Deleted People’s Files

In October 2018, Microsoft released the Windows 10 October 2018 Update (version 1809) after internal testing and preview builds for Windows Insiders.

Then reports started coming in.

Photos gone.

Documents missing.

Entire folders — deleted.

What happened

A bug in how the update handled redirected user folders (Known Folder Redirection) deleted files that were still sitting in the original folder location. The configuration was rare, but at Windows scale, “rare” still means thousands of people.

How long it took to fail

- Hours after rollout reached broader users

- Microsoft paused distribution within days

How it was fixed

- Immediate global halt

- Direct support to help affected users recover files (deleted data cannot simply be rolled back)

- Public apology (rare for update issues)

- New rollout only after about five weeks of additional validation, in November 2018

Lesson learned

Data loss is the unforgivable sin of updates.

After this incident, Microsoft dramatically expanded canary testing scenarios and filesystem simulations.
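The failure mode in this case, a cleanup step removing a folder that still held files, suggests a simple guard. Here is a minimal Python sketch of that idea. It is not Microsoft’s code: the function name `remove_old_folder_if_empty` and the notion of an “old folder” left behind after a migration are assumptions made purely for illustration.

```python
import os
import shutil
from pathlib import Path


def remove_old_folder_if_empty(old_folder: Path) -> bool:
    """Delete old_folder only if no file exists anywhere beneath it.

    Returns True if the folder was removed, False if it was left in place.
    (Hypothetical helper, for illustration only.)
    """
    if not old_folder.is_dir():
        return False
    # Walk the whole tree: a single file at any depth may be user data,
    # so the safe default is to leave everything untouched.
    for _root, _dirs, files in os.walk(old_folder):
        if files:
            return False
    # Only empty directories remain, so removal cannot lose data.
    shutil.rmtree(old_folder)
    return True
```

The design choice is that the destructive path has to prove it is safe, instead of the safe path having to prove it is needed. A check like this costs one directory walk, which is cheap next to a lost photo library.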

Turns out “Have you tried turning it off and on again?” doesn’t help when the update has already turned your files into memories.

Case Study 2: The Facebook Update That Took Down the Internet (Almost)

On October 4, 2021, a routine configuration update inside Facebook’s infrastructure triggered one of the largest outages in internet history.

Instagram, WhatsApp, Messenger — all gone.

What happened

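A configuration change made during routine maintenance cut off Facebook’s backbone network, and as a result Facebook’s BGP routes were withdrawn from the internet. With those routes gone, Facebook’s DNS servers became unreachable. In simple terms: Facebook accidentally told the internet, “I don’t exist.”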

Internal systems failed too:

- Engineers couldn’t access tools

- Badge systems stopped working

- Data centers had to be accessed in person

How long it took to fail

- Minutes after deployment

- Outage lasted ~6 hours globally

How it was fixed

- Manual reconfiguration at data centers

- Physical access by network engineers

- Gradual DNS and routing restoration

Lesson learned

Infrastructure updates can lock you out of your own recovery tools.

Facebook said afterward that it would strengthen its testing, drills, and overall resilience so that a change like this cannot isolate core access systems.
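One way to picture that lesson is a pre-flight check that refuses any routing change which would cut off the paths engineers need for recovery. The Python sketch below is a hedged illustration, not a description of Facebook’s tooling: `preflight_route_change`, the `ChangeCheck` type, and the idea of a “protected” prefix list are all hypothetical, and the prefixes in the usage example are reserved documentation ranges.

```python
from dataclasses import dataclass
from typing import AbstractSet


@dataclass(frozen=True)
class ChangeCheck:
    allowed: bool
    reason: str


def preflight_route_change(
    proposed: AbstractSet[str], protected: AbstractSet[str]
) -> ChangeCheck:
    """Reject a change that withdraws every route or any protected prefix.

    proposed:  prefixes that would still be advertised after the change.
    protected: prefixes that recovery tooling depends on (an assumption
               of this sketch, not a real product feature).
    """
    if not proposed:
        return ChangeCheck(False, "change would withdraw all advertised routes")
    missing = sorted(set(protected) - set(proposed))
    if missing:
        return ChangeCheck(
            False, "change would withdraw protected prefixes: " + ", ".join(missing)
        )
    return ChangeCheck(True, "ok")
```

For example, `preflight_route_change({"192.0.2.0/24"}, {"198.51.100.0/24"})` is rejected because the protected prefix would disappear, while a proposal that keeps it is allowed. The check itself has to run somewhere that does not depend on the network being changed, or it shares the same blind spot.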

Case Study 3: The iOS Update That Killed Cellular Service

In September 2014, Apple released iOS 8.0.1, a fast-follow update meant to fix bugs in iOS 8.

Instead, it broke something fundamental.

Phones lost:

- Cellular signal

- Touch ID

- Basic connectivity

What happened

The update conflicted with certain hardware configurations, specifically the then-new iPhone 6 and iPhone 6 Plus. A bug in low-level firmware interaction slipped past pre-release testing.

How long it took to fail

- Within hours of release

- Social media lit up instantly

How it was fixed

- Apple pulled the update the same day

- Published instructions for restoring affected phones to iOS 8.0 through iTunes

- Released a corrected version, iOS 8.0.2, the next day

Lesson learned

Hardware + software updates multiply risk.

Apple expanded device-matrix testing and strengthened staged rollouts across models.

What All Three Failures Have in Common

Despite different causes, the pattern is clear:

- Each update passed the checks it was put through before release

- Failures appeared only at real-world scale

- Rollback speed mattered more than perfection

Modern update systems are now designed with one assumption:

“Failure is inevitable. Recovery must be instant.”

Why These Failures Made Updates Safer for Everyone

Because of incidents like these, today’s updates include:

- Smaller rollout percentages

- Kill switches and feature flags

- Automatic anomaly detection

- Rollback paths that are tested as carefully as deployment paths
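To make the list above concrete, here is a minimal Python sketch of how staged percentages, a kill switch, and an anomaly check can fit together in one loop. Everything in it is an assumption for illustration: `staged_rollout`, its parameters, and the status strings are hypothetical names, and the “anomaly detection” is reduced to a single error-rate threshold.

```python
from typing import Callable, Sequence, Tuple


def staged_rollout(
    stages: Sequence[int],
    error_rate_at: Callable[[int], float],
    max_error_rate: float,
    kill_switch_on: Callable[[], bool] = lambda: False,
) -> Tuple[int, str]:
    """Advance through rollout percentages, stopping at the first bad signal.

    Returns (percent_live, status), where status is "completed",
    "rolled_back", or "killed". Any stop returns 0 percent live,
    modelling an immediate rollback.
    """
    live = 0
    for percent in stages:
        # A human or an automated system can stop everything at any stage.
        if kill_switch_on():
            return 0, "killed"
        live = percent
        # Simplified anomaly check: compare the observed error rate
        # at this stage against a fixed threshold.
        if error_rate_at(percent) > max_error_rate:
            return 0, "rolled_back"
    return live, "completed"
```

With stages of 1, 5, 25, and 100 percent and an error spike that only shows up at 25 percent, the loop stops there and the remaining 75 percent of users never see the bad build. That is the whole point of small rollout percentages: the blast radius is decided before anything goes wrong.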

Ironically, the worst failures made the ecosystem stronger.

Read This Twice

Great software isn’t defined by how rarely it breaks, but by how quietly it fixes itself when it does. At scale, stability is not perfection — it’s preparation.