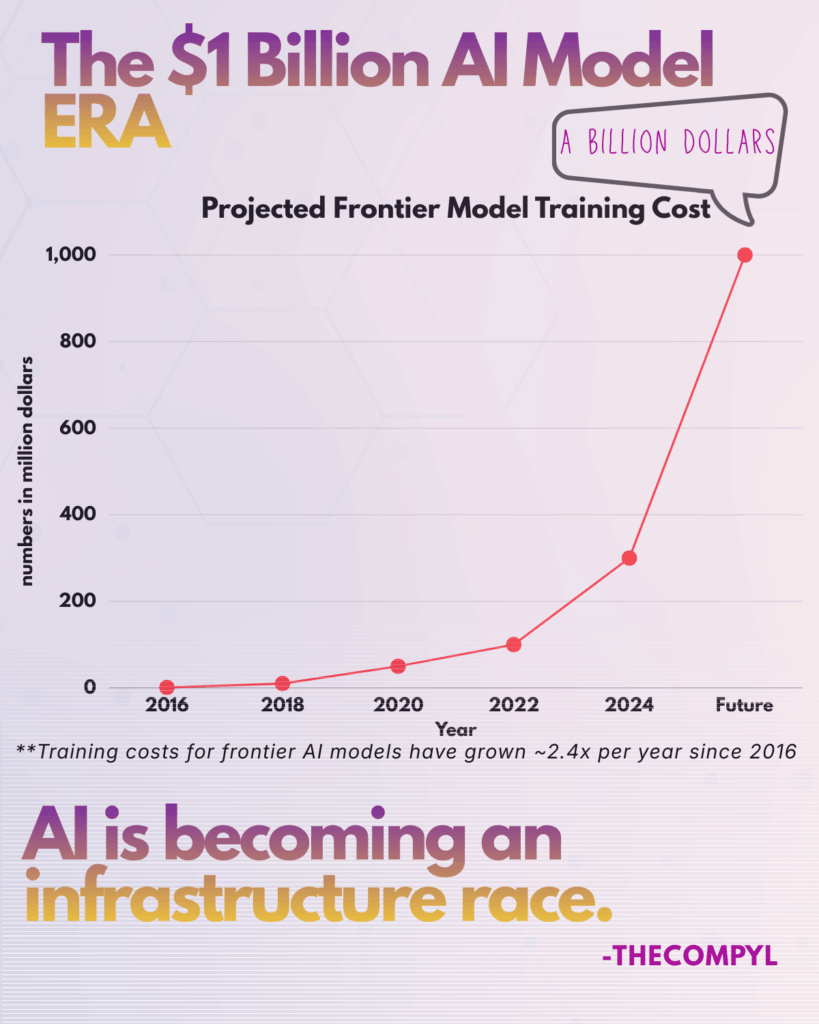

Training frontier AI models is becoming so expensive that only a few companies may be able to compete.

For Busy Readers

• Training costs for frontier AI models have increased rapidly over the past decade.

• Some projections suggest the first $1B training runs could arrive within the next few years.

• As costs rise, the AI race may increasingly favor companies with massive compute and capital.

The Quiet Explosion in AI Training Costs

In the early days of modern AI, training large models was relatively inexpensive by today’s standards.

Around 2016, training a leading AI model could cost roughly $1 million. At the time, that seemed like a significant investment.

But the economics of frontier AI have changed dramatically.

By the early 2020s, training costs for cutting-edge models had climbed into the tens of millions of dollars, with some estimates reaching into the hundreds of millions. Each new generation of models required larger datasets, more GPUs, and longer training runs.

The result: an exponential rise in the cost of building state-of-the-art AI.

Researchers at Epoch AI, who track this trend, estimate that frontier model training costs have grown by roughly 2.4× per year since 2016. In other words, costs have more than doubled every year.

If this trajectory continues, the industry may soon see its first $1 billion AI training run.
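To make the compounding concrete, here is a small sketch of the arithmetic. If a cost grows by a constant factor r each year, it takes log(target / start) / log(r) years to go from a starting cost to a target. The $100 million starting point below is a hypothetical round number chosen only for illustration, not a figure for any specific model. The 2.4× rate is the estimate quoted above.

```python
import math


def years_to_reach(start_cost: float, target_cost: float, growth_per_year: float) -> float:
    """Years needed for a cost that grows by a constant factor each year to reach a target."""
    return math.log(target_cost / start_cost) / math.log(growth_per_year)


def project_costs(start_cost: float, growth_per_year: float, years: int) -> list[float]:
    """Cost in each year from 0 to `years`, assuming constant compound growth."""
    return [start_cost * growth_per_year**year for year in range(years + 1)]


GROWTH = 2.4          # growth factor per year, from the estimate above
START_COST = 100e6    # hypothetical starting cost in USD (illustrative only)
TARGET_COST = 1e9     # the $1 billion mark

print(f"Years to reach $1B: {years_to_reach(START_COST, TARGET_COST, GROWTH):.1f}")
for year, cost in enumerate(project_costs(START_COST, GROWTH, 3)):
    print(f"Year {year}: ${cost / 1e6:,.0f}M")
```

Under these assumptions, a $100 million run crosses the $1 billion mark in a little under three years. Even a tenfold gap closes quickly at this growth rate, which is why projections of the first $1B run tend to land within the next few years.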

Why AI Models Are Getting So Expensive

The rising cost of AI training is largely driven by three factors.

1. Model Size

Modern AI models contain hundreds of billions — sometimes trillions — of parameters. Training them requires enormous amounts of compute.

Larger models generally need:

- More GPUs

- Longer training times

- Larger datasets

Each of these factors multiplies cost.
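A common back-of-the-envelope way to see how these factors multiply uses the rule of thumb that training a dense transformer takes roughly 6 × N × D floating-point operations, where N is the parameter count and D is the number of training tokens. The sketch below converts that compute into GPU-hours, dollars, and wall-clock time. Every input (model size, token count, per-GPU throughput, utilization, hourly price, cluster size) is a hypothetical round number chosen for illustration, not a figure for any real model or cloud provider. The estimate also ignores failed runs, experiments, staff, and other overheads.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    params: float              # N: number of model parameters
    tokens: float              # D: number of training tokens
    peak_flops_per_gpu: float  # peak FLOP/s of one GPU (hypothetical)
    utilization: float         # fraction of peak actually achieved (hypothetical)
    usd_per_gpu_hour: float    # rental price per GPU-hour (hypothetical)
    num_gpus: int              # cluster size (hypothetical)

    def total_flops(self) -> float:
        # Rule of thumb for dense transformers: ~6 FLOPs per parameter per token
        return 6 * self.params * self.tokens

    def gpu_hours(self) -> float:
        # FLOP/s each GPU actually delivers, after utilization losses
        effective_flops = self.peak_flops_per_gpu * self.utilization
        return self.total_flops() / effective_flops / 3600

    def cost_usd(self) -> float:
        return self.gpu_hours() * self.usd_per_gpu_hour

    def wall_clock_days(self) -> float:
        # Assumes the work splits perfectly across all GPUs
        return self.gpu_hours() / self.num_gpus / 24


run = TrainingRun(
    params=1e12, tokens=1e13, peak_flops_per_gpu=1e15,
    utilization=0.4, usd_per_gpu_hour=2.0, num_gpus=25_000,
)
print(f"Compute: {run.total_flops():.1e} FLOPs")
print(f"Cost: ${run.cost_usd() / 1e6:,.0f}M over {run.wall_clock_days():.0f} days")

# Doubling both model size and data quadruples compute, and therefore cost
bigger = TrainingRun(2e12, 2e13, 1e15, 0.4, 2.0, 25_000)
print(f"Cost ratio: {bigger.cost_usd() / run.cost_usd():.1f}x")
```

With these made-up inputs, the run costs tens of millions of dollars and takes roughly two months on 25,000 GPUs. Doubling both N and D quadruples the bill. Real runs differ in hardware, numeric precision, and efficiency, so treat the output as an order-of-magnitude estimate rather than a price quote.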

2. Compute Infrastructure

Training frontier AI models often involves tens of thousands of high-end GPUs running for weeks or months.

Cloud infrastructure, networking, storage, and cooling all add to the total cost of training runs.

Companies building frontier models are essentially operating massive temporary supercomputers.

3. The Race for Performance

AI labs are locked in a competition to build the most capable models.

Every new model aims to outperform previous ones across benchmarks and real-world applications. Achieving these improvements often requires scaling up compute dramatically.

This dynamic pushes costs higher with every generation.

The Rise of the Infrastructure Moat

If training costs reach $1 billion per model, the AI landscape could change significantly.

Historically, startups could compete with larger companies by building better algorithms or novel architectures.

But as training costs rise, access to infrastructure becomes the real advantage.

Companies with the deepest resources — including massive GPU clusters, energy capacity, and cloud infrastructure — may dominate frontier AI development.

This is one reason major technology companies are investing tens of billions of dollars into AI data centers.

The AI race is gradually becoming an infrastructure race.

What It Means for the Future of AI

The emergence of billion-dollar training runs doesn’t mean innovation will stop.

Instead, the industry may split into two layers:

Frontier AI Labs

A small number of organizations capable of training the most advanced models.

AI Builders

Thousands of startups and developers building products using existing models through APIs and open-source frameworks.

In this environment, the companies controlling the most powerful models could shape the direction of the entire AI ecosystem.

TheCOMPYL Perspective

The next phase of AI competition may not be decided by who writes the best algorithms.

It may be decided by who can afford the largest training runs.

As training costs climb toward the billion-dollar mark, the future of frontier AI will increasingly depend on three things:

compute, capital, and infrastructure.