For busy readers

- Maia is Microsoft’s in-house AI accelerator chip built for cloud-scale AI workloads

- It’s optimized for inference—where AI models respond to users in real time

- Maia doesn’t replace NVIDIA or AMD; it gives Microsoft control, efficiency, and leverage

So… what exactly is Maia?

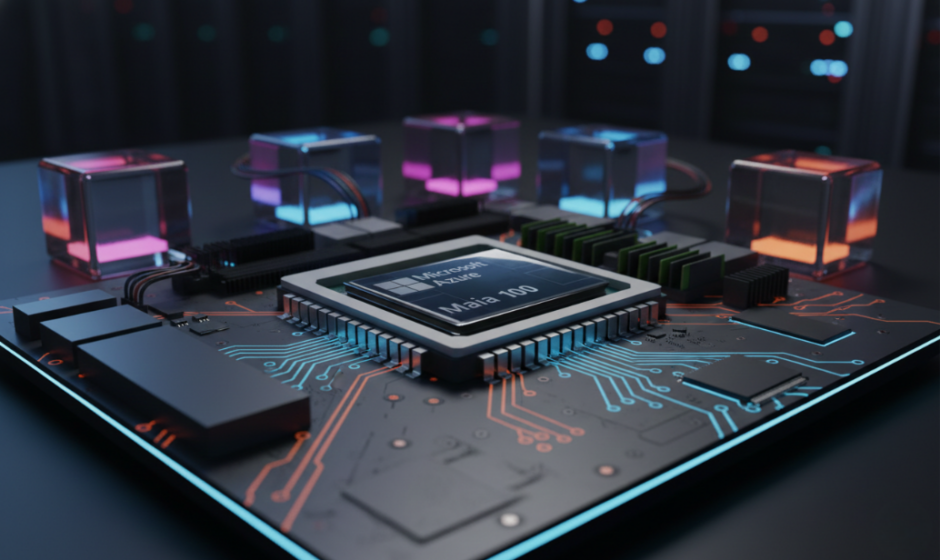

Maia is Microsoft’s custom-designed AI chip family, built specifically for running large AI models efficiently inside Azure data centers.

It’s not a consumer processor.

It’s not a PC chip.

And you won’t find it listed on Amazon.

Maia exists for one reason: to make AI services cheaper, faster, and more predictable at massive scale.

If you’ve used Microsoft Copilot, AI-powered search, or other Azure-hosted AI tools, some of those requests may well have been served by Maia in the background.

Why Microsoft decided to build its own AI chip

The AI boom changed the economics of computing.

Running AI models—especially large language models—is expensive, power-hungry, and heavily dependent on external suppliers. NVIDIA GPUs dominate the market, and demand has often outpaced supply.

For Microsoft, that creates three problems:

- Cost volatility

- Supply-chain dependency

- Limited control over optimization

Maia is Microsoft’s answer—not to escape partners like NVIDIA, but to reduce risk and regain balance.

What Maia is designed to do (and what it isn’t)

Inference, not training

Maia is primarily built for AI inference—the moment when a trained model generates responses for real users.

This includes:

- Answering questions

- Generating text or summaries

- Making predictions in real time

Training massive models still relies heavily on NVIDIA GPUs. Maia steps in after training, where scale, efficiency, and cost matter most.
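To see why cost matters so much at inference time, here is a rough back-of-the-envelope sketch. Every number in it (hourly cost, tokens per second, daily volume) is a made-up assumption chosen to keep the arithmetic readable; none of them are Microsoft or Maia figures.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Dollars spent to generate one million tokens on a single accelerator."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical accelerators: same throughput, different hourly running cost.
baseline = cost_per_million_tokens(hourly_cost_usd=4.00, tokens_per_second=2000)
cheaper = cost_per_million_tokens(hourly_cost_usd=3.00, tokens_per_second=2000)

# Hypothetical service volume: one trillion generated tokens per day.
daily_tokens = 1_000_000_000_000
daily_saving = (baseline - cheaper) * daily_tokens / 1_000_000

print(f"baseline: ${baseline:.3f} per million tokens")
print(f"cheaper:  ${cheaper:.3f} per million tokens")
print(f"saving:   ${daily_saving:,.0f} per day at this volume")
```

The per-token difference is a fraction of a cent, but multiplied across a service that never switches off it becomes a meaningful line item. That is the kind of margin a purpose-built inference chip is meant to chase.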

Purpose-built, not general-purpose

Unlike GPUs, which are designed to handle many kinds of workloads, Maia is optimized for:

- Low-precision AI math

- High-throughput token generation

- Energy-efficient, always-on AI services

This specialization allows Microsoft to squeeze more performance per dollar—and per watt—out of its data centers.
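"Low-precision AI math" is easier to picture with a toy example. The sketch below squeezes a few model weights into 8-bit integers and then restores them. It assumes simple symmetric int8 quantization purely for illustration; the article does not say which number formats Maia actually uses, and the function names are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / 127
    return [round(v / scale) for v in values], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 8-bit integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.40]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("largest rounding error:", max_error)
```

Each stored number now needs 8 bits instead of 32, so the same memory and bandwidth can move roughly four times as many weights, at the price of a small rounding error. Hardware built around that trade-off is where much of the per-watt gain comes from.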

Inside Maia (without getting too nerdy)

Microsoft’s latest generation, often referred to as Maia 200, is built using advanced semiconductor manufacturing and includes:

- Large high-bandwidth memory (HBM) to keep AI models fed with data

- Tensor-optimized compute cores for modern AI workloads

- Custom networking and interconnects for massive Azure clusters

- Tight integration with Microsoft’s software stack, from Azure orchestration to AI frameworks

The result isn’t flashy benchmark wins—it’s operational efficiency at planetary scale.

Where Maia fits in Microsoft’s bigger chip strategy

Maia is part of a broader shift.

Microsoft now operates a heterogeneous silicon strategy:

- NVIDIA GPUs for large-scale training

- AMD CPUs for cloud compute

- Microsoft-designed chips like Maia for optimized AI services

This mix allows Microsoft to:

- Avoid over-reliance on any single vendor

- Match hardware to workload precisely

- Negotiate from a position of strength

In cloud computing, flexibility beats loyalty.
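As a loose illustration of "matching hardware to workload," here is a tiny routing sketch. The pool names, the `Workload` type, and the fallback order are all hypothetical and only mirror the three bullets above; this is not how Azure's scheduler is implemented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    kind: str  # "training", "inference", or "general"


# Preferred hardware pools per workload kind, in order (illustrative only).
PREFERENCES = {
    "training": ["nvidia-gpu"],
    "inference": ["maia", "nvidia-gpu"],  # fall back to GPUs if Maia is full
    "general": ["amd-cpu"],
}


def choose_pool(workload, available):
    """Return the first preferred pool that currently has capacity, else None."""
    for pool in PREFERENCES.get(workload.kind, []):
        if pool in available:
            return pool
    return None


chat = Workload(name="chat-completion", kind="inference")
print(choose_pool(chat, {"maia", "nvidia-gpu", "amd-cpu"}))
print(choose_pool(chat, {"nvidia-gpu", "amd-cpu"}))
```

The fallback list is the point: custom silicon takes the work it is best at, and the vendor hardware stays in the mix rather than being replaced.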

Why Maia doesn’t threaten NVIDIA (yet)

Despite the headlines, Maia isn’t a GPU killer.

NVIDIA still dominates:

- Model training

- Developer ecosystems

- Cutting-edge AI research

Maia simply handles the parts of the AI pipeline where Microsoft can design better economics for itself. Think of it as infrastructure plumbing, not the engine everyone sees.

And in a world where AI demand keeps exploding, adding more chips from more sources is the only realistic answer.

Why this actually matters

Maia represents a quiet but important shift in AI infrastructure.

It signals that:

- AI is now core infrastructure, not an experiment

- Cloud providers can’t rely on off-the-shelf hardware forever

- The future of computing is customized, specialized, and invisible

The biggest AI breakthroughs won’t always come from new models—but from the systems that make them affordable to run.

The strategic takeaway

Maia isn’t about showing off.

It’s about survival, scale, and control.

Microsoft isn’t trying to win the chip wars. It’s making sure it never loses access to the compute its AI services run on.

The most powerful AI chips won’t sit on your desk.

They’ll sit quietly in a data center, answering billions of questions—without ever asking for attention.